Claude Code Source Code Leak: What It Means for Cloud Security and AI Development

In the rapidly evolving landscape of artificial intelligence, a significant security event has sent shockwaves through the developer community. Someone recently leaked …

CVE-2025-55182, EU Cloud Breach, and Google Vertex AI Flaw: This Week in Cloud Security

The cloud security landscape continues to evolve at breakneck speed. This week brought a wave of significant developments that should be on every security team’s radar, from a massive Next.js vulnerability actively exploited across hundreds …

How to Secure AI Agents With Workload Identity Federation: A Zero-Trust Rollout Plan for Cloud Teams

AI agents are starting to look less like chat features and more like cloud workloads with decision-making power. They call APIs, pull from data stores, trigger pipelines, and in some cases take write actions …

What the Stryker Intune Wipe Teaches Cloud Teams About Identity Governance

One compromised admin account should not be enough to wipe a global company’s operating surface. But that is exactly why the March 2026 Stryker incident matters far beyond one manufacturer or one Microsoft tenant. According to public reporting and CISA’s follow-up alert, attackers abused legitimate endpoint management capabilities inside Microsoft …

29 Million Leaked Secrets: How AI Coding Accelerated the Secrets Sprawl Crisis

29 Million Reasons Your Cloud Credentials Are Already Leaked In 2025, developers pushed 28.65 million hardcoded secrets to public GitHub repositories — a 34% jump from the previous year and the largest single-year spike ever recorded. That number comes from GitGuardian’s State of Secrets Sprawl 2026 report, and it barely …

Why Your WAF Isn’t Enough: A Practical Guide to RASP in Cloud-Native Environments

Your WAF blocks thousands of requests a day. Some are real attacks. Most aren’t. And the ones that actually matter — the SQL injection that slips past a pattern matcher, the deserialization exploit that doesn’t match any known signature — sail right through. Runtime Application Self-Protection (RASP) sits inside your …

Editorial Pipeline Validation Test — April 2026

Automated Publishing Test This is a test article to validate the editorial pipeline. Published on 2026-04-03 at 21:17 UTC. Why This Test Exists After a full audit of the cron job environment, all publishing endpoints needed validation. Conclusion If you are reading this, the pipeline is working correctly. This article …

Secret Sprawl in Cloud-Native Infrastructure: How to Audit and Consolidate Before It Breaks You

Most cloud-native teams don’t have a secrets management problem. They have a secrets sprawl problem. The API key lives in a Kubernetes Secret, the database password is in a CI/CD variable, the TLS certificate renewal is somewhere in Terraform state, and nobody is quite sure where the staging credentials ended …

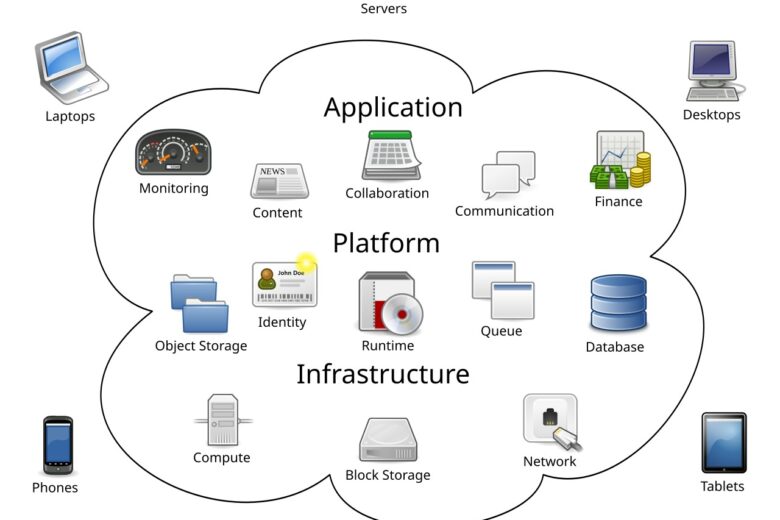

Zero Trust for Cloud Workloads Starts With Identity, Not the Network

Most cloud breaches no longer begin with someone “breaking through the perimeter.” They begin with a token, a role, a service account, or a workload identity that already has the right to be there. That is why zero trust …

Approval Gates for High-Risk AI Agent Actions: How to Add Human Oversight Without Re-creating Ticket Hell

AI agents are already reading logs, opening pull requests, querying production systems, and triggering infrastructure changes. The real problem is not whether the model can reason. It is whether the surrounding control plane knows …

Service Account Sprawl in AI Platforms: How to Shrink Machine Identity Blast Radius Without Slowing Delivery

AI platforms rarely fail because the model is too clever. They fail because the plumbing around the model is too permissive. A retrieval worker gets broad storage access, an evaluation pipeline keeps a long-lived …

Permission Boundaries for AI Agent Runners: How to Contain Automation Without Breaking Delivery

AI agent runners do not need standing admin rights to be useful. A practical containment model combines short-lived identity, hard permission ceilings, scoped workflow tokens, and namespace-level RBAC so teams can ship automation without giving every …